Autonomous Robots

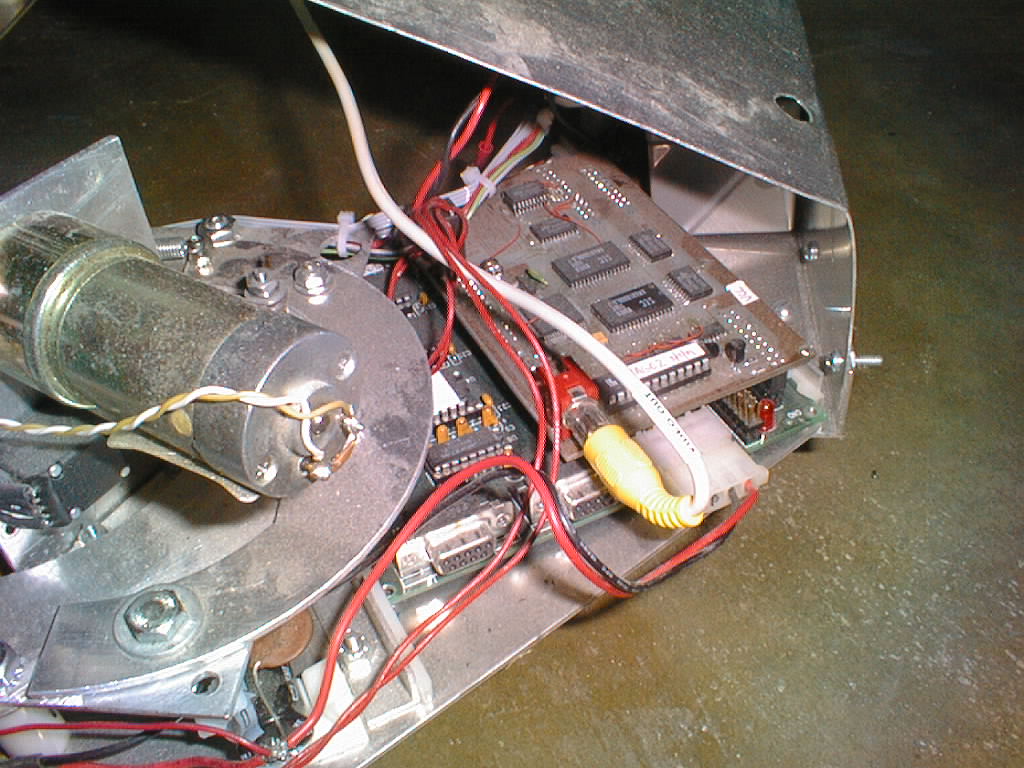

This senior project was built for the University of Wisconsin–Madison’s first autonomous robotics competition, in which two fully autonomous robots played soccer against each other. My robot used an onboard camera for ball tracking, custom motor control through an H-bridge, servos for ball capture, an IR sensor to confirm possession, and a stepper-driven steering system. For its time, combining real-time vision, sensing, and electromechanical control in a single student-built robot was state-of-the-art.

Just a Few Tweaks...

Here I am, the night before the competition, tuning the vision system to recognize the field (green), the goal posts (red), and the soccer ball under the arena's expected lighting conditions.
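The write-up doesn't say how the vision code told these colors apart, so what follows is only a minimal sketch of one common approach: per-pixel threshold rules, assuming the frame grabber delivered 8-bit RGB values. The `Label` names and the threshold constants (`DARK_MAX`, `LIGHT_MIN`, `DOMINANCE`) are hypothetical; they stand in for the kind of values that get re-tuned under the arena lighting.

```python
from enum import Enum


class Label(Enum):
    FIELD = "field"            # green carpet
    GOAL_POST = "goal_post"    # red posts
    BALL_DARK = "ball_dark"    # black panels of the ball
    BALL_LIGHT = "ball_light"  # white panels of the ball
    UNKNOWN = "unknown"


# Hypothetical thresholds on 8-bit channels; these are the knobs that
# would be re-tuned for the arena lighting.
DARK_MAX = 60    # every channel at or below this counts as black
LIGHT_MIN = 200  # every channel at or above this counts as white
DOMINANCE = 40   # margin by which one channel must beat the other two


def classify(r: int, g: int, b: int) -> Label:
    """Classify one RGB pixel with simple threshold rules."""
    if max(r, g, b) <= DARK_MAX:
        return Label.BALL_DARK
    if min(r, g, b) >= LIGHT_MIN:
        return Label.BALL_LIGHT
    if g - max(r, b) >= DOMINANCE:
        return Label.FIELD
    if r - max(g, b) >= DOMINANCE:
        return Label.GOAL_POST
    return Label.UNKNOWN
```

Checking black and white first keeps the two-tone ball from being mistaken for field or post, since neither extreme has a dominant channel.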

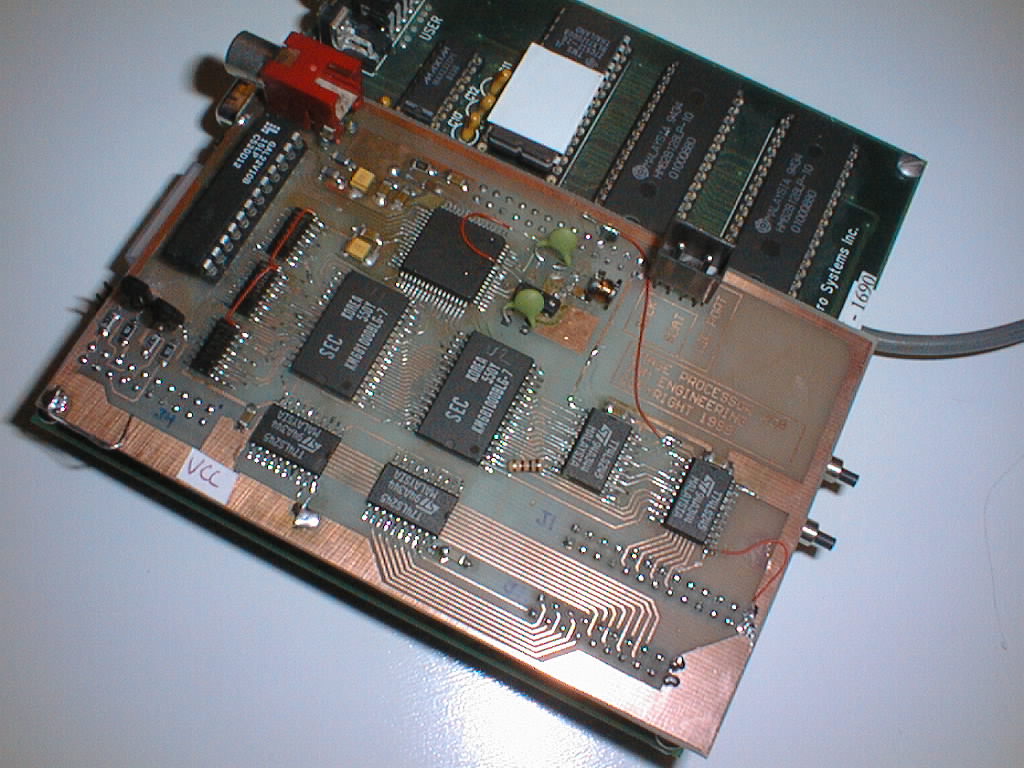

Frame Grabber

I designed the schematic, laid out the PCB, and etched, drilled, and assembled this surface-mount frame-grabber board, an ambitious student build in 1999. With digital cameras still out of reach, the system used an analog NTSC camera and a frame-grabber IC controlled by GAL (Generic Array Logic), an early programmable-logic device and a precursor to modern FPGAs. On command, the GAL captured an image into shared memory, where the CPU read it for vision-based targeting and navigation.
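Once the GAL has written a frame into shared memory, the CPU only needs to index into that buffer. Here is a minimal sketch, assuming a row-major buffer with one byte per pixel (the board's actual pixel format and resolution aren't documented here), that finds the horizontal centroid of the matching pixels and reports how far it sits from the image center. `target_offset` and its `is_target` predicate are hypothetical names.

```python
from typing import Callable, Optional


def target_offset(frame: bytes, width: int, height: int,
                  is_target: Callable[[int], bool]) -> Optional[float]:
    """Return the target's horizontal offset from image center, in pixels.

    Assumes pixel (x, y) is stored at frame[y * width + x]. Returns None
    when no pixel matches, so the caller can keep searching.
    """
    if len(frame) != width * height:
        raise ValueError("frame size does not match width * height")
    sum_x = 0
    count = 0
    for y in range(height):
        row = y * width
        for x in range(width):
            if is_target(frame[row + x]):
                sum_x += x
                count += 1
    if count == 0:
        return None
    centroid_x = sum_x / count
    # Center of a width-pixel row lies between pixels for even widths.
    return centroid_x - (width - 1) / 2
```

A positive offset means the target is right of center, so the steering would turn right; `None` tells the caller the target isn't in view.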

Guidance, Navigation & Control

The core navigation loop followed a preplanned search pattern until the vision system detected the black-and-white soccer ball. The robot then drove toward the ball until the IR sensor confirmed capture, and closed its arms to secure it. Next, it performed a 360° visual sweep to locate the two red goal posts, aligned itself with them, and drove forward until the posts appeared at the correct spacing in the camera image. Once in position, it executed a preprogrammed shooting maneuver.
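The sequence above maps naturally onto a finite-state machine. The sketch below is illustrative only, not the robot's original code: the state names, the `Sensors` fields, and the constants `IMAGE_CENTER_X`, `ALIGN_TOLERANCE_PX`, and `SHOOT_SPACING_PX` are all hypothetical, and falling back to the sweep when the posts drop out of view is an assumption the description above doesn't cover.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional, Tuple


class State(Enum):
    SEARCH = auto()    # follow the preplanned search pattern
    APPROACH = auto()  # drive toward the ball
    CAPTURE = auto()   # arms closing around the ball
    SWEEP = auto()     # rotate in place looking for the two red posts
    ALIGN = auto()     # center the gap between the posts
    ADVANCE = auto()   # drive until the posts look far enough apart
    DONE = auto()      # shot has been taken


@dataclass
class Sensors:
    ball_visible: bool = False
    ir_has_ball: bool = False
    # Image x-coordinates of the two red posts, or None unless both are seen.
    posts: Optional[Tuple[float, float]] = None


# Hypothetical tuning constants (pixels).
IMAGE_CENTER_X = 160.0
ALIGN_TOLERANCE_PX = 5.0
SHOOT_SPACING_PX = 120.0


def step(state: State, s: Sensors) -> Tuple[State, str]:
    """Return (next_state, action_to_command) for one control cycle."""
    if state is State.SEARCH:
        if s.ball_visible:
            return State.APPROACH, "drive_to_ball"
        return State.SEARCH, "follow_search_pattern"
    if state is State.APPROACH:
        if s.ir_has_ball:
            return State.CAPTURE, "close_arms"
        return State.APPROACH, "drive_to_ball"
    if state is State.CAPTURE:
        return State.SWEEP, "rotate_in_place"
    if state is State.DONE:
        return State.DONE, "idle"
    # SWEEP, ALIGN and ADVANCE all depend on seeing both posts.
    if s.posts is None:
        # Assumption: if the posts drop out of view, resume the sweep.
        return State.SWEEP, "rotate_in_place"
    left, right = sorted(s.posts)
    if state is State.SWEEP:
        return State.ALIGN, "stop"
    if state is State.ALIGN:
        error = (left + right) / 2 - IMAGE_CENTER_X
        if abs(error) <= ALIGN_TOLERANCE_PX:
            return State.ADVANCE, "drive_forward"
        return State.ALIGN, "turn_right" if error > 0 else "turn_left"
    # ADVANCE: the posts spread apart in the image as the robot closes in.
    if right - left >= SHOOT_SPACING_PX:
        return State.DONE, "shoot"
    return State.ADVANCE, "drive_forward"
```

Keeping every transition in one `step` function makes the behavior easy to test off the robot: feed it a sequence of sensor readings and check the commanded actions.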

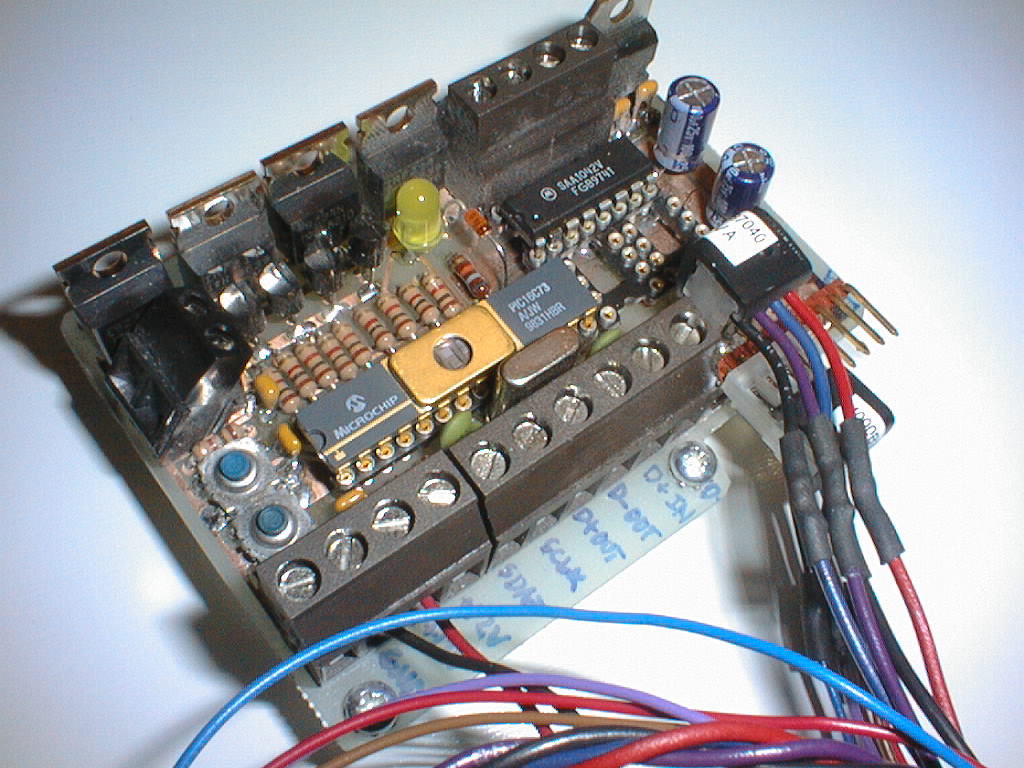

Motor Control & Steering

The motor and steering controller was based on an early microcontroller, a PIC16C73 to be exact. You programmed it in assembly; yes, assembly. Debugging was usually done with a blinking LED, and erasing the microcontroller's EPROM program memory meant placing it under a UV light for 10 minutes.
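The original controller was hand-written PIC assembly, but the underlying logic comes down to small lookup tables. As a sketch in Python, assuming a four-wire stepper driven with a two-phase-on full-step sequence and a drive motor behind a two-input H-bridge: the bit order of `FULL_STEP`, the `(IN1, IN2)` convention in `HBRIDGE`, and the helper names `stepper_pattern` and `steer_to` are all hypothetical, so check the actual driver's truth table before relying on them.

```python
from typing import List

# Two-phase-on full-step sequence for a four-wire stepper. The bit order
# (A, B, A', B') is an assumed wiring, not the original board's.
FULL_STEP = (0b1100, 0b0110, 0b0011, 0b1001)

# Assumed (IN1, IN2) convention for a two-input H-bridge driving the
# drive motor; real drivers differ, especially for braking.
HBRIDGE = {
    "forward": (1, 0),
    "reverse": (0, 1),
    "off": (0, 0),
}


def stepper_pattern(position: int) -> int:
    """Coil pattern for an absolute step position.

    Python's % always returns 0..3 here, so negative positions
    (steering past center the other way) wrap correctly.
    """
    return FULL_STEP[position % len(FULL_STEP)]


def steer_to(current: int, target: int) -> List[int]:
    """Coil patterns to output, in order, to step from current to target."""
    direction = 1 if target > current else -1
    return [
        stepper_pattern(p)
        for p in range(current + direction, target + direction, direction)
    ]
```

On a microcontroller, each returned pattern would be written to an output port with a short delay between writes; walking the table backward reverses the rotation.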